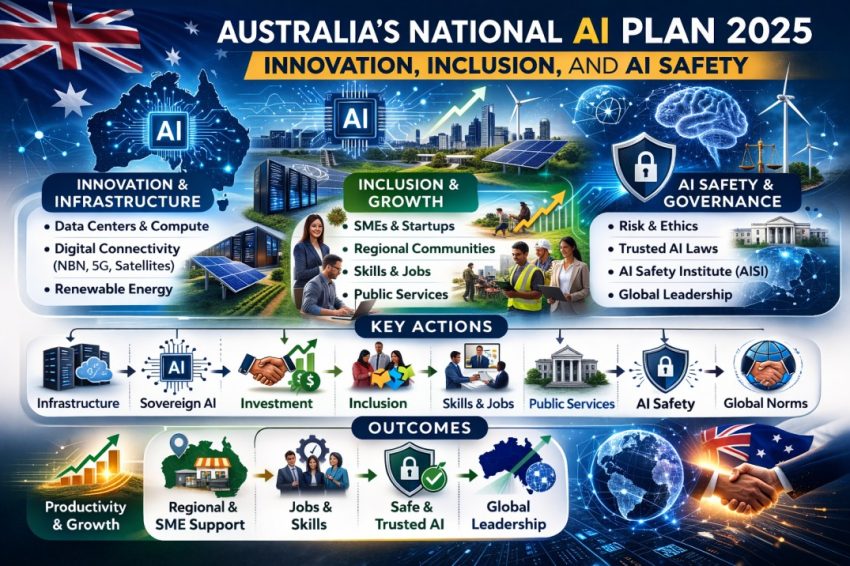

The Australian National AI Plan 2025 (NAP), issued by the Australian Government Department of Industry, Science and Resources, adopts a whole-of-economy and whole-of-society approach. It aims to ensure that AI drives productivity and innovation and benefits all Australians, rather than delivering gains only to big tech companies or metropolitan areas in a zero-sum manner. The NAP operates within strong legal, ethical, and safety boundaries. Its three core goals are capturing opportunities, spreading benefits, and keeping Australians safe.

Core Goals

The first core goal states the ambition to make Australia a regional AI and data infrastructure hub. This can be achieved by strengthening domestic AI capability rather than relying solely on the consumption of foreign AI. Further, it aims to attract both global and domestic investment in AI and data centers.

Second, the plan aims to spread benefits by ensuring that AI gains are shared fairly across SMEs and regional and remote communities. The NAP also aims to extend these benefits to the public sector, women, people with disabilities, and digitally excluded groups. The document notes that achieving this requires improvements in productivity, wages, job quality, and public services.

Third, the document emphasizes keeping Australians safe by managing AI risks using existing laws and by building public trust through responsible AI practices. The NAP also expresses the ambition to lead globally on AI safety and governance.

Key Actions

The NAP outlines a total of nine action strategies. The first is building smart infrastructure: expanding data centers, enhancing compute capacity, and widening digital connectivity, including improvements to the NBN, mobile networks, satellites, and submarine cables. The plan also proposes developing national data center principles focused on sustainability, energy, and water use, so that AI growth is aligned with the renewable energy transition. This will be supported by mapping national compute capacity to identify gaps.

The document shows that scaling AI is only possible with efficient compute power, connectivity, and energy security. It focuses on high-value, sector-specific AI in areas such as health, agriculture, resources, and manufacturing. This is to be achieved through investment in sovereign AI for government via the GovAI platform and through support for locally developed AI models that reflect local culture and language and protect data security. The plan also highlights the importance of responsibly unlocking high-value public and private datasets and of funding AI R&D, startups, and commercialisation through initiatives such as the CRC AI Accelerator. Through this approach, Australia envisions itself as an AI producer and exporter rather than only a user.

The plan also promotes Australia as a stable and trusted AI investment destination, supported by rapid, coordinated approvals for large AI and data center projects. According to the document, Australia can leverage superannuation funds and foreign direct investment to support this objective.

Addressing Barriers

Scaling AI adoption by supporting SMEs and not-for-profits is vital. Supporting First Nations businesses and narrowing the metro–regional AI divide are also essential, as is expanding the AI Adopt Program under the National AI Centre. This could be implemented through practical AI adoption guidance, templates, and advisory services while ensuring Indigenous Data Sovereignty. The document identifies gaps in awareness, skills, and access as the primary obstacles to AI adoption and is geared towards closing them. To equip and train Australians, AI and digital literacy need to be part of both education and the workforce.

This is to be realized through retraining workers rather than replacing them. In addition, protecting workers’ rights in AI-enabled workplaces and ensuring transparency in AI-based hiring, rostering, and performance management are regarded as essential under the NAP. Key tools to build an AI-enabled workforce include TAFEs, micro-credentials, and VET reform, as well as the Next Generation Graduates Program and union–employer co-design.

Improving public services is another priority and can be achieved by using AI to deliver faster services, personalised support, and better policy outcomes. This includes deploying AI responsibly through GovAI and integrating AI pilots in areas such as healthcare, education, veterans’ services, and environmental monitoring, while ensuring that human oversight remains mandatory for decision-making.

The NAP also relies on technology-neutral existing laws as the foundation for mitigating harms and addressing risks such as bias and discrimination, deepfakes and online abuse, privacy breaches, and AI-enabled crime. Further, reviewing AI implications in consumer law, healthcare, copyright, and privacy law is considered essential.

The NAP also sets out plans for partnerships on global norms. The main motivation is to shape global AI governance, which would help establish Australia as a reliable middle power. Engagement in international forums such as the UN Global Digital Compact, G7, OECD, GPAI, and Indo-Pacific digital partnerships is part of this effort. These initiatives seek to harmonize local regulations with international standards while preparing for future risks such as super AI and AGI.

The proposed AI Safety Institute (ASI), which would build independent capabilities to monitor AI risks, test emerging AI systems, and advise regulators and ministers, appears to be well thought out. The ASI will focus on upstream risks (model capabilities) and downstream harms (real-world impacts), in coordination with international AI safety bodies.

Conclusion

Overall, Australia’s National AI Plan focuses on building AI capability domestically while sharing its benefits fairly. It seeks to do so by governing AI risks responsibly while positioning Australia as a trusted global AI partner.